Lead Ads Don’t Have to Suck: How to Leverage Them Correctly

Lead ads (aka: lead generation ads) come with a bad reputation. Companies either think they don’t deliver or the leads are fake. Here’s the reality: lead ads are an easy way to grow your leads. And when done correctly, the ROI is high. Not using…

Unlock the Secrets of Organic CPA: The Ultimate Guide to Cost Per Acquisition

Imagine unlocking the secrets to driving business growth by simply understanding the cost of acquiring customers through organic search traffic. Welcome to the world of Organic CPA! This metric is a game-changer in the realm of marketing strategies, offering insights into customer behaviors and paving…

Secrets We’ve Learned from Years Working with Paid Account Reps at Meta, TikTok, LinkedIn and Google

Have you ever wanted to pull back the curtain and see how account reps from the biggest online platforms really do business? After managing over $153 million in combined annual ad spend for our clients, we have a clear insider’s look at the inner workings…

Stop Playing the Lottery with Your Ads: A/B Testing for Meteoric Conversion Rates!

Introduction What is A/B Testing? A/B testing, also known as split testing, is an experimental method used in digital advertising. At its most basic, it involves comparing two versions of the same web page – the ‘A’ and ‘B’ – to see which performs better…

How to Use Geotargeting for Effective Customer Reach

In the rapidly evolving landscape of digital marketing, one strategy has emerged as a game-changer for businesses aiming to target specific audiences: geotargeting. Geotargeting is a powerful tool that allows businesses to deliver different content or advertisements to consumers based on their geographic locations. By…

SaaS Dashboards Improve Marketing Performance

In today’s competitive market, SaaS companies constantly seek ways to gain an edge and stand out. However, success is not guaranteed, with McKinsey & Company reporting that only 50% of SaaS companies achieve multi-billion dollar status despite annual growth rates of 60%. In such a…

How to Take Advantage of the Post-Pandemic Pent-Up Demand for Vacation Rentals and Lodging

After a year of living through a global pandemic, people are traveling to satisfy their travel fever as life begins to return to normal and an increasing portion of the population gets vaccinated. With this return to normal comes a high demand to travel, making…

The Shift to Direct-to-Consumer Accelerates in ‘21

The eCommerce industry has changed the way that we all shop, moving away from brick-and-mortar setups and providing greater access to all types of products. However, in recent years, Direct-to-Consumer (DTC) retail has exploded. And for good reason. If you’re still using a middleman…

Google My Business Basics

What is Google My Business? If you’re not already using Google My Business (GMB) for your company, you’ve at least encountered it during your own internet searching. Anytime a search is performed for a specific query, for example “seafood restaurants near me,” then businesses with…

Why You Should Know About Digital Asset Management

What is DAM (Digital Asset Management)? DAM platforms are software that store and organize digital assets. This helps brands efficiently find the most relevant media they have created. This includes PDFs, photos, videos, audio, virtual reality and other forms of digital media. Benefits Assets are appended with…

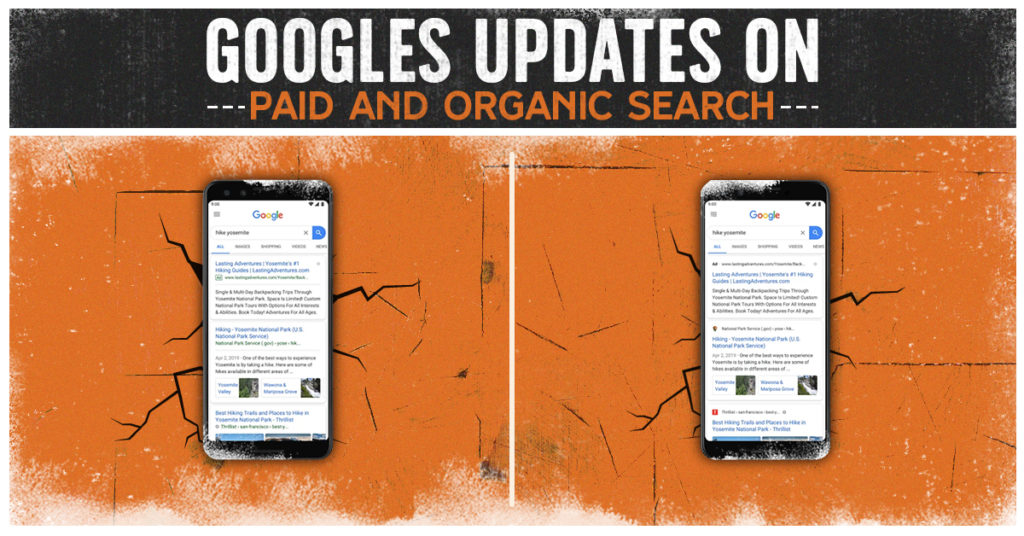

Google’s Updates on Paid and Organic Search

Google is stepping up its design in 2020 for paid and organic search. The left image was the original display for mobile search. The right image is the upcoming display of mobile search. Source: TechCrunch So…What Changed? Paid Search Google Search Ads on desktops have…

Dominate the Market with Google Display Ads 2023: A Step-by-Step Guide

Introduction Welcome aboard! If you’ve landed here, it’s probably because you’re looking to elevate your online marketing strategy with Google Display Ads. Congratulations, because you’ve hit the bullseye! This is your definitive “Google Display Ads guide” to master the realm of online advertising in 2023…
