Unlock the Secrets of Organic CPA: The Ultimate Guide to Cost Per Acquisition

Imagine unlocking the secrets to driving business growth by simply understanding the cost of acquiring customers through organic search traffic. Welcome to the world of Organic CPA! This metric is a game-changer in the realm of marketing strategies, offering insights into customer behaviors and paving…

5 Conversion Rate Tactics That Are Low Cost, High Reward

Ultimately, the goal of any business is to convert consumers into customers and keep those customers coming back. But the term “conversion rate” in marketing merely refers to the percentage of people who perform a desired action, so depending on your business goals, conversions can be defined…

How To Improve Page & Site Speed

What is Page Speed? If you’re not entirely sure what the term “page speed” refers to, it’s not a difficult concept to grasp. Essentially, page speed simply refers to how quickly a web page loads. Why Does Page Speed Matter? When it comes to…

CRO & SEO: How To Combine Them To Affect Your Bottom Line

Contrary to what some marketers think, CRO (conversion rate optimization) and SEO (search engine optimization) do not actually compete with each other. CRO and SEO go hand-in-hand to drive your website’s traffic and improve conversion rates. When it comes to marketing, conversions refer to any…

What Metrics Should I Track for SEO Success?

One of the most advantageous aspects of online marketing is that there are a myriad of ways to track success. Examining your analytics reveals insights into how effective your marketing efforts are and where there is room for improvement. Knowing which metrics to look for…

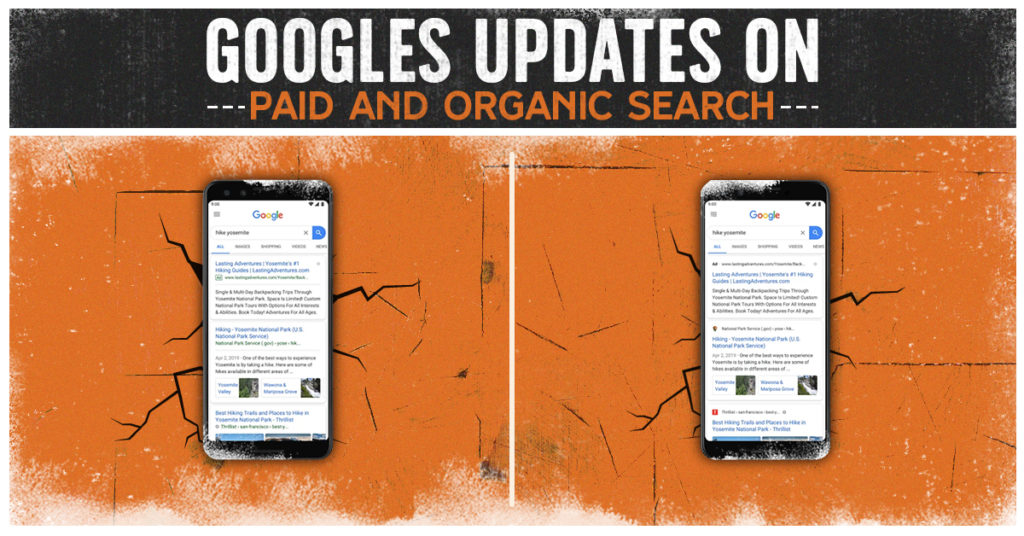

Google’s Updates on Paid and Organic Search

Google is stepping up its design in 2020 for paid and organic search. The left image was the original display for mobile search. The right image is the upcoming display of mobile search. Source: TechCrunch So…What Changed? Paid Search Google Search Ads on desktops have…

Site PageRank & Screaming Frog Visualizations

Lately I’ve been looking at site structures and how PageRank flows through a domain. It’s helped shed light on some inefficiencies that some of our clients encounter. That’s why this week I’m taking some time to write about my recent revelations. Plus, it’s my turn…

SEO Impact of Google Assistant | Clicks & Clients Blog

Technology is getting smarter. Google Assistant and Voice Search are becoming more popular, with more than 40% of adults using verbal commands daily. Whether it’s Cortana, Siri, Google, or Amazon, smart speakers are being used more frequently and more accurately than they were a…

Grow Your Business with a Local Link Building Strategy

It’s no secret that top-ranked sites in search engine results receive more web traffic, but driving curious visitors to your home page requires planning and patience. If you aren’t satisfied with your current SEO results, it might be because you’ve underestimated the importance of a…

Ultimate Guide to a Complete SEO Website Audit

The essence of online business success hinges on the capacity of a brand to make its mark in the digital realm. For this, Search Engine Optimization (SEO) remains the critical cog in the mechanism, steering your audience to discover your website and interact with your…

What SEO Metrics Should I Track to Measure Success?

One of the most advantageous aspects of online marketing is that there are a myriad of ways to track success. Examining your analytics reveals insights into how effective your marketing efforts are and where there is room for improvement. Knowing which SEO metrics to look…

[Video] Beginner’s Guide to SEO

What can African elephants teach you about SEO? Quite a lot, actually. SEO might seem intimidating at first glance, but the fundamentals are very straightforward. In the video above, we walk you through some of the basics to get you started optimizing your site. Yes, Google’s…
